Years ago, I accepted a position that moved me from a high-volume industry, with frequent product changeovers and intense market forces, to a low-volume industry that was far less connected to market dynamics. My boss at the time offered some perspective and advice that has stuck with me ever since.

He suggested that the industry I was moving to had elements that would frustrate me and that would be difficult to navigate given how I tend to approach things. He believed that the lack of frequent, unambiguous feedback in the new industry would let organizations drift, allowing dysfunction to develop largely unchecked. He thought the resulting organizational dynamics would frustrate someone focused on products, product development, and product performance.

In hindsight, I realize that his view of my new opportunity applied an observation he had made about three engineering groups, A, B, and C, and that this observation has deep roots in control systems ideas. Of the three groups, he contended, “Everyone knows that ‘A’ is the highest-performing engineering group.” I nodded in agreement. He continued, “Everyone knows that ‘B’ is the next highest-performing engineering group.” I nodded in agreement. Then, “Everyone knows that ‘C’ is the lowest-performing engineering group.” Again, I nodded in agreement. My boss then asked, “Why do you think that is?” I shrugged. It was early in my career, and I didn’t have much basis on which to offer an answer. He then shared his theory: “In ‘A,’ you can define what good is, and you can measure it. In ‘B,’ you can define what good is, but you can’t measure it. In ‘C,’ you can’t define good, and you can’t measure it.”

Today, with the benefit of much more experience with both organizational systems and physical control systems, I can see the parallels my boss was pointing out. In my view, those parallels are deep, and they offer a useful way to think about organizational dynamics through some basic principles of control system performance. What does it mean if you can’t define good? What does it mean if you can’t measure it?

In their simplest form, feedback control systems measure the actual state, compare that measurement to the target, and then apply a correction that moves the actual state toward the target state. In state-space control, two principles apply directly to organizational dynamics: observability and controllability. At its core, observability is a mathematical test of whether the system’s internal states can be seen in, and reconstructed from, the measurements you are making. Controllability asks whether the inputs available to you, meaning the forces you can apply at the locations where you are able to apply them, can move every state of the system where you want it to go.
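Both definitions reduce to rank tests that are easy to check numerically. The sketch below is a minimal illustration, assuming a textbook cart-and-pole model linearized about the upright position (not necessarily the model behind the figures in this note) with made-up parameter values. The functions `controllability_rank` and `observability_rank` and the two sensor matrices are hypothetical names introduced for illustration; the code uses NumPy.

```python
import numpy as np

# Hypothetical parameters (SI units): cart mass M, bob mass m, rod length l, gravity g.
M, m, l, g = 1.0, 0.1, 0.5, 9.81

# Textbook cart-and-pole model linearized about upright (one common sign convention).
# State: [cart position, cart velocity, pendulum angle, pendulum angular rate].
A = np.array([
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, -m * g / M, 0.0],
    [0.0, 0.0, 0.0, 1.0],
    [0.0, 0.0, (M + m) * g / (M * l), 0.0],
])
# Single input: a horizontal force applied to the cart.
B = np.array([[0.0], [1.0 / M], [0.0], [-1.0 / (M * l)]])


def controllability_rank(A, B):
    """Rank of [B, AB, ..., A^(n-1) B]; full rank n means controllable."""
    n = A.shape[0]
    return np.linalg.matrix_rank(
        np.hstack([np.linalg.matrix_power(A, k) @ B for k in range(n)]))


def observability_rank(A, C):
    """Rank of [C; CA; ...; C A^(n-1)]; full rank n means observable."""
    n = A.shape[0]
    return np.linalg.matrix_rank(
        np.vstack([C @ np.linalg.matrix_power(A, k) for k in range(n)]))


C_angle_only = np.array([[0.0, 0.0, 1.0, 0.0]])     # sensor on the pendulum angle only
C_cart_position = np.array([[1.0, 0.0, 0.0, 0.0]])  # sensor on the cart position only

print("controllability rank:", controllability_rank(A, B))
print("observability rank, angle sensor:", observability_rank(A, C_angle_only))
print("observability rank, cart position sensor:", observability_rank(A, C_cart_position))
```

This should print 4, 2, and 4. The single cart force can reach all four states, so the model is controllable. An angle sensor by itself leaves two of the four states invisible: in this linearization, the pendulum’s motion does not depend on where the cart is or how fast it moves, so nothing about the cart shows up in the angle. A cart position sensor, by contrast, makes every state observable, because the cart’s acceleration depends on the pendulum angle.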

Real systems challenge a control system in several ways. In a previous note, I wrote about lags and noise. In this note, I am going to write about deadbands and quantization. Both have analogs in organizational dynamics, and both have analogs in the dynamics of failure that Henry Petroski discussed.

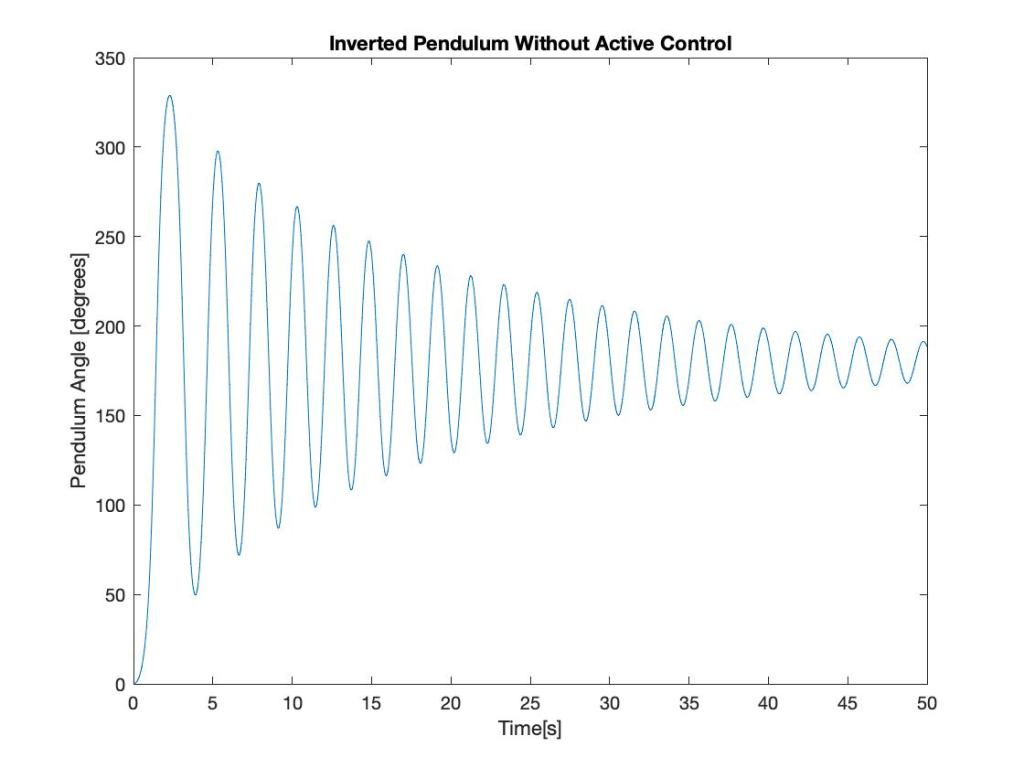

For illustrative purposes, I will use the inverted pendulum example. The first picture shows how the system would behave if the pendulum were bumped when it is balanced vertically.

Figure 1: Inverted Pendulum without Active Control

In this case, the instability of the system is obvious. The inverted pendulum swings around and settles at the point exactly opposite the desired one. The next figure shows how the system behaves when a highly effective control system is in place.

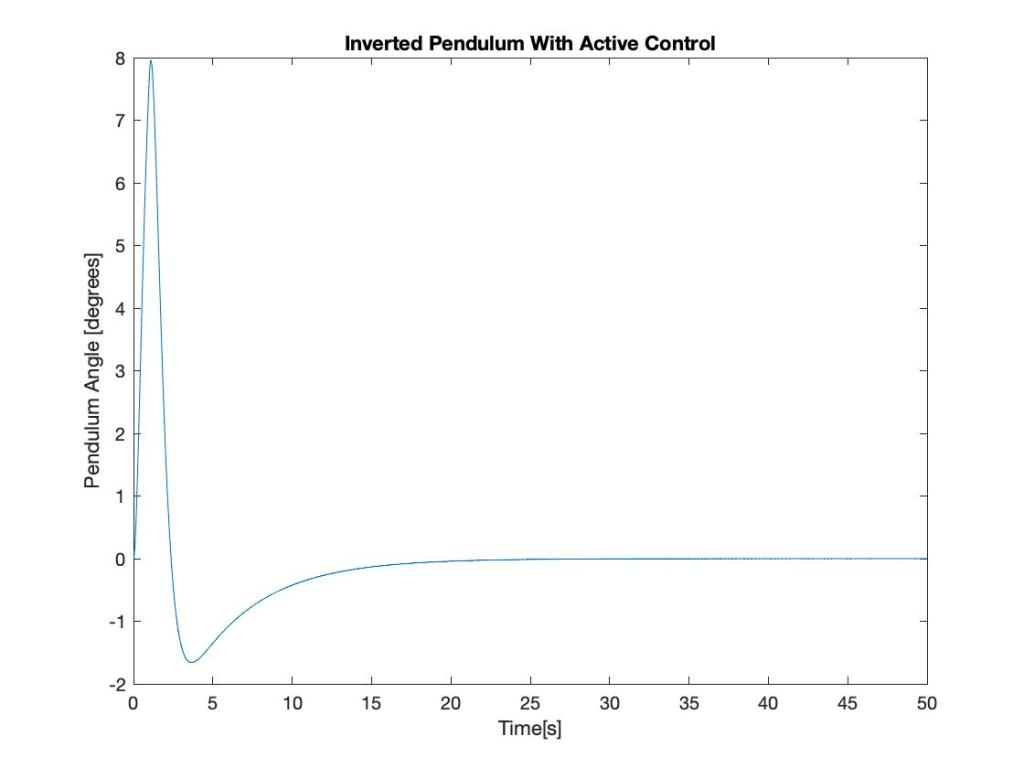

Figure 2: Inverted Pendulum with Active Control Enabled

In this case, the control system recovers quickly after the bump and holds the pendulum at the desired position. This simulation assumed that the controller had perfect knowledge of the pendulum’s position, applied its corrective action without delay, and received its measurements without delay. In real systems and in real organizations, these assumptions often do not hold. The next figure shows the effect of not being able to measure the pendulum’s position precisely. The measurement is still accurate, but its precision is limited.

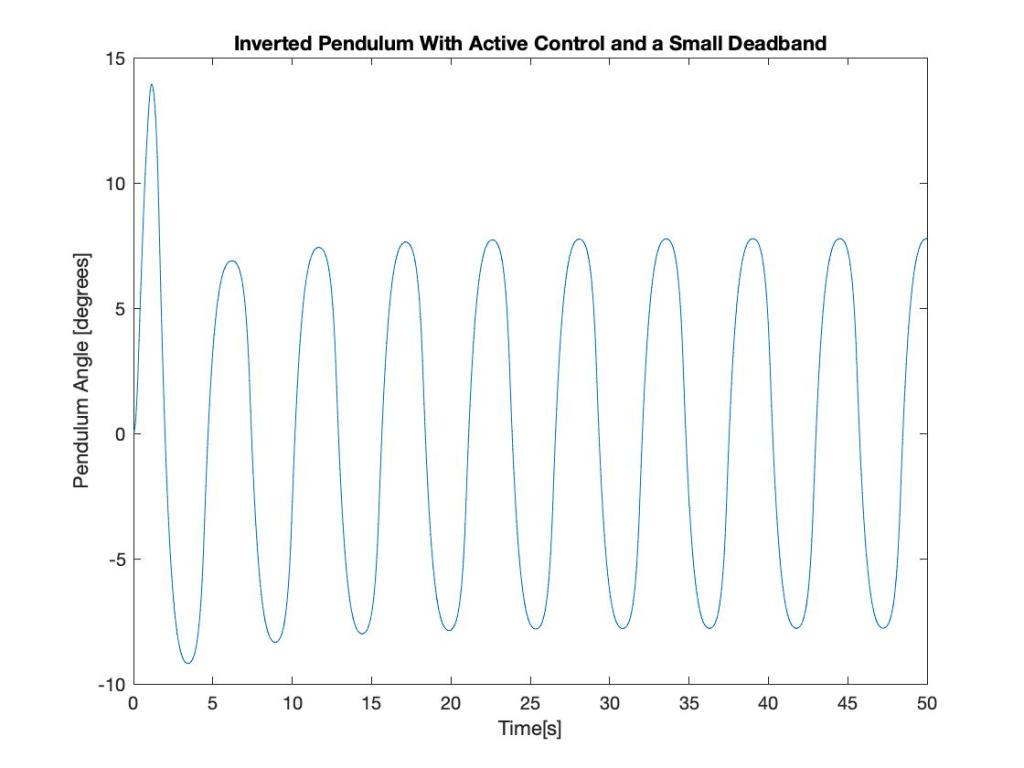

Figure 3: Pendulum Response with a Small Deadband

In this case, the ambiguity in the system is modeled as a deadband. Until the pendulum moves far enough one way or the other, the measurement system doesn’t detect the motion, and because no motion is detected, the controller applies no correction. The result is that the system oscillates more, and with larger swings. Echoing the lesson from my boss years ago, this is a physical example of the real impact of ambiguous data: the more ambiguity, the more pronounced the negative impact. The next figure extends the analogy further by adding a delay in applying the control action.
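The deadband mechanism itself can be sketched in a few lines of Python. This is a minimal simulation, not the model behind Figure 3. It assumes a normalized pendulum obeying θ'' = (g/l)·sin θ + u with θ = 0 upright, a PD controller with made-up gains `kp` and `kd`, an angle sensor that reports zero until the true angle leaves the band, and an exact angular-rate measurement. The functions `deadband`, `simulate`, and `late_peak` are hypothetical names.

```python
import math


def deadband(value, width):
    """Hypothetical sensor: reports 0 until |value| reaches width, then the true value."""
    return 0.0 if abs(value) < width else value


def simulate(width, theta0=0.05, t_end=20.0, dt=1e-3, kp=30.0, kd=8.0, g_over_l=9.81):
    """Angle history of a PD-stabilized inverted pendulum (assumed model).

    theta'' = (g/l) * sin(theta) + u, with theta = 0 upright.
    The angle passes through the deadband sensor; the angular rate is assumed exact.
    """
    theta, omega = theta0, 0.0  # start just after the bump
    history = []
    for _ in range(int(t_end / dt)):
        u = -kp * deadband(theta, width) - kd * omega
        alpha = g_over_l * math.sin(theta) + u
        # Semi-implicit Euler: update the rate first, then the angle.
        omega += alpha * dt
        theta += omega * dt
        history.append(theta)
    return history


def late_peak(history, dt=1e-3, window=5.0):
    """Largest |theta| over the final `window` seconds of a run."""
    return max(abs(x) for x in history[-int(window / dt):])


print(f"no deadband:       {late_peak(simulate(0.0)):.2e} rad")
print(f"0.01 rad deadband: {late_peak(simulate(0.01)):.2e} rad")
```

Without the deadband, the late-time error shrinks to essentially zero. With a 0.01 rad band, the controller never sees the small errors, the unstable upright position keeps pushing the pendulum outward, and the residual error stays on the order of the band width instead of decaying. In this simplified model, the pendulum ends up hovering near the edge of the band rather than returning to vertical, and a wider band leaves a roughly proportionally larger residual error.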

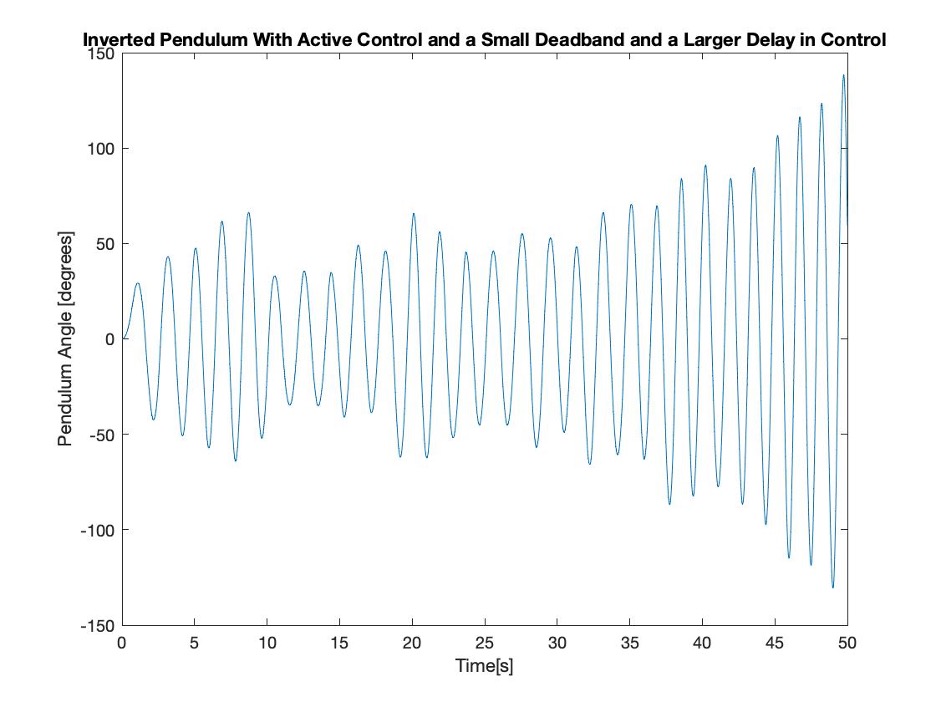

Figure 4: Pendulum with a Deadband and a Large Delay in Control

In this case, the deadband and the large control delay have turned what was a stabilized system into an unstable one. From the perspective of the organizational dynamics my boss described years ago, this is an example of the negative behaviors that can emerge in systems with ambiguous performance measurements and slow responses.
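A control delay can be added to a simulation like this with nothing more than a queue of pending commands. The sketch below is hypothetical and is not the model behind Figure 4. It assumes a normalized pendulum obeying θ'' = (g/l)·sin θ + u, a PD controller with made-up gains, an angle sensor with a zero-output deadband, and an exact angular-rate measurement. Each command is applied `delay_s` seconds after it is computed, and the run stops once the pendulum passes horizontal. The gains, band width, and delay values are illustrative only.

```python
import math
from collections import deque


def simulate(width, delay_s, theta0=0.05, t_end=20.0, dt=1e-3,
             kp=30.0, kd=8.0, g_over_l=9.81):
    """Angle history of a PD-controlled inverted pendulum (assumed model) with a
    deadband on the angle sensor and a pure delay on the applied control."""
    steps = int(round(delay_s / dt))
    pending = deque([0.0] * steps)  # commands issued but not yet applied
    theta, omega = theta0, 0.0
    history = []
    for _ in range(int(t_end / dt)):
        measured = 0.0 if abs(theta) < width else theta  # deadband sensor
        pending.append(-kp * measured - kd * omega)
        u = pending.popleft()  # the command issued delay_s seconds ago
        alpha = g_over_l * math.sin(theta) + u
        omega += alpha * dt  # semi-implicit Euler step
        theta += omega * dt
        history.append(theta)
        if abs(theta) > math.pi / 2:  # past horizontal: treat as a fall
            break
    return history


for delay in (0.0, 0.3):
    h = simulate(width=0.01, delay_s=delay)
    fell = abs(h[-1]) > math.pi / 2
    print(f"delay {delay:.1f} s: peak |theta| = {max(map(abs, h)):.2f} rad, fell = {fell}")
```

With no delay, the pendulum stays close to upright. With a 0.3 s delay and these gains, each correction arrives after the situation has already changed, the corrections overshoot, the swings grow, and the pendulum falls. In this particular sketch, the delay alone is enough to destabilize the loop; the deadband mainly limits how closely the pendulum can be held when the loop is stable. Sweeping `delay_s` between the two values is one way to find where the behavior changes.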

Writing about another industry, but in a similar vein, Henry Petroski observed that failures tend to occur in a somewhat cyclic pattern. Across industries, there are examples of people extrapolating from a working system, typically by degrees. Because the changes come by degrees, the feedback is often ambiguous until it becomes catastrophically unambiguous.

Personally, I have concluded that the cyclic nature of failures in many industries is not a comment on the systems in those industries. Instead, I believe it is often the result of a lack of consistent, unambiguous feedback. In the absence of such feedback, people build narratives around ambiguous behavior. Authors including Nassim Taleb, Jim Collins, John Kotter, and Henry Petroski have all commented on various facets of this very human tendency. To me, the lesson is to make feedback clear and timely. And in industries or systems where feedback at the top level is unavoidably ambiguous and slow, I believe it is imperative to design the organization in a way that increases the observability and controllability of the system.
